Engineering Predictive Certainty.

Strategy is often lost in the transition from raw data to production code. We bypass the friction with a four-stage ledger-based approach designed for precision, visibility, and measurable business impact.

The Integrity Audit

Before a single model is built, we examine the structural integrity of your data. This is not a surface-level glance; we evaluate provenance, drift patterns, and schema consistency to ensure your predictive analytics rest on a stable foundation.

Critical Checkpoints

- Historical Bias Detection

- Pipeline Latency Mapping

- Feature Availability Scoring
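As a rough illustration of what a feature availability score could look like, the sketch below measures the share of records that carry a usable value for each feature. The function name `feature_availability`, the rule for what counts as "missing", and the sample rows are all assumptions made for this example, not a description of the actual audit tooling.

```python
from typing import Any, Mapping, Sequence


def feature_availability(
    records: Sequence[Mapping[str, Any]], features: Sequence[str]
) -> dict[str, float]:
    """Return, per feature, the share of records with a non-missing value."""
    if not records:
        return {name: 0.0 for name in features}
    scores = {}
    for name in features:
        # Assumption: absent keys, None, and empty strings all count as missing.
        present = sum(1 for row in records if row.get(name) not in (None, ""))
        scores[name] = present / len(records)
    return scores


# Made-up sample rows, for illustration only.
rows = [
    {"customer_id": 1, "region": "Bangkok", "monthly_spend": 1200.0},
    {"customer_id": 2, "region": "", "monthly_spend": None},
    {"customer_id": 3, "region": "Chiang Mai"},
    {"customer_id": 4, "region": "Bangkok", "monthly_spend": 640.0},
]
scores = feature_availability(rows, ["customer_id", "region", "monthly_spend"])
print(scores)
```

A score near 1.0 suggests the feature is reliably populated; a low score flags a signal that may need an acquisition strategy before it can support a model.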

Schema Alignment

We map how disparate data sources interact. By identifying silos early, we prevent the "garbage-in, garbage-out" cycle that plagues many data science initiatives in large-scale Thai enterprises.
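One simple way to surface mismatches between sources is to compare their column definitions directly. The following is a minimal sketch under stated assumptions: each source is described by a plain `{column: type}` mapping, and the names `compare_schemas`, `crm`, and `billing` are hypothetical examples rather than part of any real client system.

```python
def compare_schemas(left: dict[str, str], right: dict[str, str]) -> dict[str, list]:
    """Compare two {column: type} mappings and report where they disagree."""
    shared = sorted(left.keys() & right.keys())
    return {
        # Columns that exist in one source but not the other.
        "only_in_left": sorted(left.keys() - right.keys()),
        "only_in_right": sorted(right.keys() - left.keys()),
        # Shared columns whose declared types differ.
        "type_conflicts": [
            (col, left[col], right[col]) for col in shared if left[col] != right[col]
        ],
    }


# Hypothetical schemas for two sources that should join on customer_id.
crm = {"customer_id": "int", "signup_date": "date", "region": "str"}
billing = {"customer_id": "str", "invoice_total": "float", "region": "str"}

report = compare_schemas(crm, billing)
print(report)
```

In this example the join key `customer_id` is typed differently in the two sources, which is the kind of conflict that is far cheaper to catch at the audit stage than after a model has been trained on a faulty join.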

Gap Mitigation

If data is missing for specific predictive goals, we don't guess. We design synthetic data bridges or acquisition strategies to ensure the model has the signals it requires to function accurately.

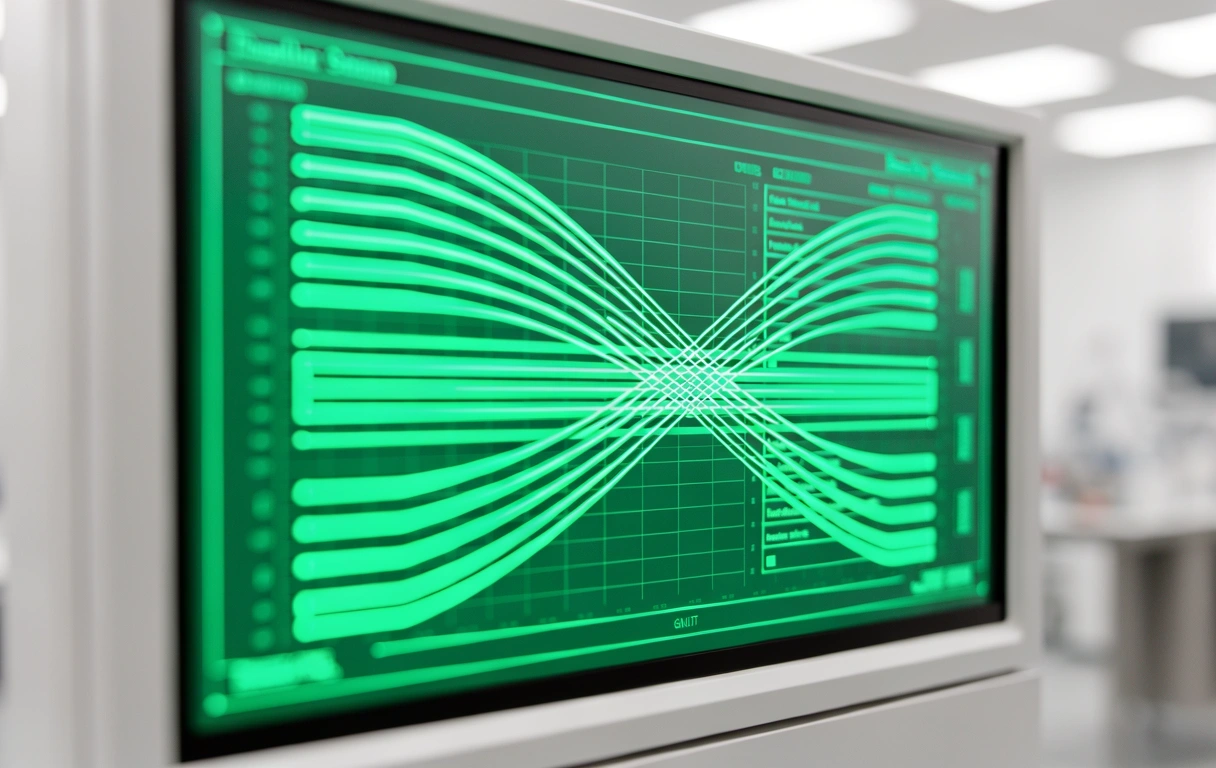

Model Logic vs. Business Reality

How we bridge the gap between algorithmic accuracy and operational utility.

Experimental Synthesis

Our data science team develops bespoke architectures tailored to your specific constraints. We prioritize explainability—ensuring that your leadership understands why a model makes a specific prediction.

- Rapid prototyping of multiple regression and classification nodes.

- Cross-validation against regional market volatility benchmarks.

Production Hardening

A model in a notebook is a theory. A model in production is a tool. We transform prototypes into robust APIs and integrated microservices that handle real-time traffic without degrading performance.

- Containerized deployment for cloud or on-premise Bangkok servers.

- Automated monitoring for concept drift and performance decay.
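Drift monitoring can take many forms. One commonly used measure for shifts in a feature's distribution is the Population Stability Index (PSI), sketched below with the standard library only. The choice of PSI, the equal-width binning, and the `1e-6` floor for empty bins are assumptions made for this illustration, not a statement of the monitoring stack actually deployed.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float], current: Sequence[float], bins: int = 10
) -> float:
    """Compare two samples of one feature; larger values mean a bigger shift."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def bin_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    expected = bin_shares(baseline)
    actual = bin_shares(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


# Synthetic data: the "current" sample is squeezed into the upper half of the range.
training_sample = [i / 100 for i in range(100)]
live_sample = [0.5 + i / 200 for i in range(100)]

print(population_stability_index(training_sample, training_sample))
print(population_stability_index(training_sample, live_sample))
```

Identical samples give a PSI of zero, and the value grows as the live distribution moves away from the training baseline. A frequently quoted rule of thumb treats values above roughly 0.2 as worth investigating, though the right threshold depends on the feature and the business context.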

Continuous Insight Extraction

The engagement doesn't end at deployment. We establish a feedback loop where predictive analytics results influence future strategic adjustments.

Performance Reviews

Bi-weekly calibration sessions to ensure the model is meeting the KPIs defined during the initial audit phase.

Knowledge Transfer

We don't believe in black boxes. We train your internal teams to interpret outputs and manage basic model maintenance.

Adaptive Scaling

As your business grows from local Bangkok operations to international markets, we scale the data pipelines accordingly.

Data is a liability until it is a Decision.

Our methodology is built for companies that have outgrown Excel and off-the-shelf software. We provide the custom engineering required to turn complexity into a competitive advantage.

Engagement Transparency

How long does a typical data audit take?

Depending on the complexity of your stack, a thorough audit takes between 10 and 20 working days. We provide a comprehensive report detailing data health, missing variables, and a feasibility score for your proposed predictive goals.

Do you work with non-digital data?

We specialize in digitizing and structuring physical archives if they contain historical signals necessary for predictive analytics. Our team in Bangkok can assist in designing the ingestion pipelines for legacy formats.

What happens if a model loses accuracy?

"Concept Drift" is inevitable. Our deployment includes a monitoring layer that alerts both our team and yours if performance drops below a pre-set threshold, allowing for rapid retraining or feature adjustment.
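To make the idea of a pre-set threshold concrete, here is a minimal sketch of a rolling accuracy check. The class name `AccuracyMonitor`, the window size, and the 0.8 threshold are hypothetical values chosen for the example; a real deployment would route the alert to a notification channel instead of returning a boolean.

```python
from collections import deque


class AccuracyMonitor:
    """Track accuracy over the most recent labelled predictions."""

    def __init__(self, threshold: float, window: int = 100):
        self.threshold = threshold
        self.outcomes = deque(maxlen=window)  # old results fall off automatically

    def record(self, predicted, actual) -> bool:
        """Record one labelled prediction; return True if an alert should fire."""
        self.outcomes.append(predicted == actual)
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # wait for a full window before judging
        accuracy = sum(self.outcomes) / len(self.outcomes)
        return accuracy < self.threshold


monitor = AccuracyMonitor(threshold=0.8, window=5)
labelled = [(1, 1), (0, 0), (1, 1), (1, 1), (0, 0), (1, 0), (1, 0)]
alerts = [monitor.record(predicted, actual) for predicted, actual in labelled]
print(alerts)
```

The alert fires only on the final prediction, once two of the last five outcomes are wrong and windowed accuracy falls to 0.6. Waiting for a full window keeps a single early mistake from triggering a false alarm.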

Who owns the algorithms?

Unlike many "Science as a Service" platforms, Tokyo Data Science clients retain full intellectual property of the bespoke models and pipelines developed during the engagement.